Difference between revisions of "Shortest path"

| Line 1: | Line 1: | ||

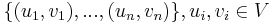

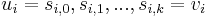

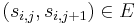

| − | The '''shortest paths''' problem is one of the most fundamental problems in [[graph theory]]. Given a directed graph <math>G = (V,E)</math>, possibly weighted, and a set of pairs of vertices <math>\{(u_1,v_1), ..., (u_n,v_n)\}, u_i, v_i \in V</math>, the problem is to compute, for each <math>i</math>, a path in <math>G</math> from <math>u_i</math> to <math>v_i</math> (a list of vertices <math>u_i = s_{i,0}, s_{i,1}, ..., s_{i,k} = v_i</math> such that for all <math>0 \leq j < k</math>, <math>(s_{i,j},s_{i,j+1}) \in E</math>) such that no other path in <math>G</math> from <math>u_i</math> to <math>v_i</math> has a lower total weight. | + | The '''shortest paths''' problem is one of the most fundamental problems in [[graph theory]]. Given a directed graph <math>G = (V,E)</math>, possibly weighted, and a set of pairs of vertices <math>\{(u_1,v_1), ..., (u_n,v_n)\}, u_i, v_i \in V</math>, the problem is to compute, for each <math>i</math>, a '''simple''' path in <math>G</math> from <math>u_i</math> to <math>v_i</math> (a list of vertices <math>u_i = s_{i,0}, s_{i,1}, ..., s_{i,k} = v_i</math> such that for all <math>0 \leq j < k</math>, <math>(s_{i,j},s_{i,j+1}) \in E</math>) such that no other simple path in <math>G</math> from <math>u_i</math> to <math>v_i</math> has a lower total weight. |

Shortest paths in undirected graphs can be computed by replacing each undirected edge with two arcs of the same weight, one going in each direction, to obtain a directed graph. | Shortest paths in undirected graphs can be computed by replacing each undirected edge with two arcs of the same weight, one going in each direction, to obtain a directed graph. | ||

| Line 14: | Line 14: | ||

===Relaxation=== | ===Relaxation=== | ||

There are theoretically many ways to refine overestimates but a specific way, known as '''relaxation''', is used in all the algorithms discussed in this article. Relaxation can take place when three conditions are met: | There are theoretically many ways to refine overestimates but a specific way, known as '''relaxation''', is used in all the algorithms discussed in this article. Relaxation can take place when three conditions are met: | ||

| − | # The currently best overestimate for the distance from some vertex <math>i</math> to some vertex <math> | + | # The currently best overestimate for the distance from some vertex <math>i</math> to some vertex <math>k</math> is <math>d_1</math>; |

| − | # The currently best overestimate for the distance from <math> | + | # The currently best overestimate for the distance from <math>k</math> to some vertex <math>j</math> is <math>d_2</math>; |

| − | # The currently best overestimate for the distance from <math>i</math> to <math> | + | # The currently best overestimate for the distance from <math>i</math> to <math>j</math> is greater than <math>d_1+d_2</math>. (This includes the case in which it is infinite.) |

| − | Relaxation refines the best overestimate for the distance from <math>i</math> to <math> | + | Relaxation refines the best overestimate for the distance from <math>i</math> to <math>j</math> by setting it to <math>d_1+d_2</math>, which is better than its current value. |

| + | |||

| + | '''Theorem''': Relaxation will never strictly underestimate the distance from <math>i</math> to <math>j</math>. | ||

| + | |||

| + | '''Proof''': Concatenating the shortest paths from <math>i</math> to <math>k</math> and <math>k</math> to <math>j</math> gives a path from <math>i</math> to <math>j</math> with length less than or equal to <math>d_1+d_2</math>. Therefore <math>d_1+d_2</math> is an overestimate. | ||

| + | |||

| + | '''Theorem''': In a graph with no cycles of negative weight, relaxation is impossible when all distances are correctly known. | ||

| + | |||

| + | '''Proof''': Using the variables defined above, this implies that the distance from <math>i</math> to <math>j</math> is in fact greater than the sum of the distances from <math>i</math> to <math>k</math> and <math>k</math> to <math>j</math>, which is impossible, as we can merely concatenate shortest paths from <math>i</math> to <math>k</math> and <math>k</math> to <math>j</math> to obtain a path from <math>i</math> to <math>j</math> of <math>d_1+d_2</math>, which is less. | ||

| + | |||

| + | '''Theorem''': | ||

==Single-source shortest paths== | ==Single-source shortest paths== | ||

Revision as of 20:53, 15 November 2010

The shortest paths problem is one of the most fundamental problems in graph theory. Given a directed graph  , possibly weighted, and a set of pairs of vertices

, possibly weighted, and a set of pairs of vertices  , the problem is to compute, for each

, the problem is to compute, for each  , a simple path in

, a simple path in  from

from  to

to  (a list of vertices

(a list of vertices  such that for all

such that for all  ,

,  ) such that no other simple path in

) such that no other simple path in  from

from  to

to  has a lower total weight.

has a lower total weight.

Shortest paths in undirected graphs can be computed by replacing each undirected edge with two arcs of the same weight, one going in each direction, to obtain a directed graph.

Contents

Variations

Three variations of the shortest path algorithm exist, and they are discussed in the following sections.

- In the single-pair shortest path problem, there is only one pair

in the problem set. In other words the shortest path is desired between a single pair of vertices.

in the problem set. In other words the shortest path is desired between a single pair of vertices. - In the single-source shortest paths problem, the problem set is of the form

. One vertex,

. One vertex,  , is designated the source, and we wish to find the shortest paths from the source to all other vertices. (To solve the analogous single-destination shortest paths problem, we merely reverse the directions of all edges, which reduces it to single-source.)

, is designated the source, and we wish to find the shortest paths from the source to all other vertices. (To solve the analogous single-destination shortest paths problem, we merely reverse the directions of all edges, which reduces it to single-source.) - In the all-pairs shortest paths problem, the problem set is

; that is, we wish to know the shortest paths from every vertex to every other vertex.

; that is, we wish to know the shortest paths from every vertex to every other vertex.

Approach

All the shortest paths algorithms discussed in this article have the same basic approach. At their core, they compute not the shortest paths themselves, but the distances. Using information computed in order to compute the distances, one can easily then reconstruct the paths themselves. They begin with the knowledge that the distance from any vertex to itself is zero, and they overestimate all other distances they need. (By this it is meant that they find a number  for each pair

for each pair  under consideration such that the distance from

under consideration such that the distance from  to

to  is less than or equal to

is less than or equal to  .) At some point, all overestimates will be refined, perhaps gradually, perhaps at once, so that once the algorithm has terminated, they are exactly the correct distances.

.) At some point, all overestimates will be refined, perhaps gradually, perhaps at once, so that once the algorithm has terminated, they are exactly the correct distances.

Relaxation

There are theoretically many ways to refine overestimates but a specific way, known as relaxation, is used in all the algorithms discussed in this article. Relaxation can take place when three conditions are met:

- The currently best overestimate for the distance from some vertex

to some vertex

to some vertex  is

is  ;

; - The currently best overestimate for the distance from

to some vertex

to some vertex  is

is  ;

; - The currently best overestimate for the distance from

to

to  is greater than

is greater than  . (This includes the case in which it is infinite.)

. (This includes the case in which it is infinite.)

Relaxation refines the best overestimate for the distance from  to

to  by setting it to

by setting it to  , which is better than its current value.

, which is better than its current value.

Theorem: Relaxation will never strictly underestimate the distance from  to

to  .

.

Proof: Concatenating the shortest paths from  to

to  and

and  to

to  gives a path from

gives a path from  to

to  with length less than or equal to

with length less than or equal to  . Therefore

. Therefore  is an overestimate.

is an overestimate.

Theorem: In a graph with no cycles of negative weight, relaxation is impossible when all distances are correctly known.

Proof: Using the variables defined above, this implies that the distance from  to

to  is in fact greater than the sum of the distances from

is in fact greater than the sum of the distances from  to

to  and

and  to

to  , which is impossible, as we can merely concatenate shortest paths from

, which is impossible, as we can merely concatenate shortest paths from  to

to  and

and  to

to  to obtain a path from

to obtain a path from  to

to  of

of  , which is less.

, which is less.

Theorem: